Introduction: The Rise of AI and the Age of Digital Trust

Artificial Intelligence (AI) has evolved from a futuristic concept into an everyday reality. From virtual assistants like Alexa to recommendation engines on Netflix and AI-driven chatbots in customer service, AI has changed how people interact with technology. But while this transformation brings convenience and personalisation, it also raises a critical question: How safe is the data powering these intelligent systems?

Many customer-facing AI systems depend on large volumes of sensitive and often deeply personal data. This data forms the foundation of machine learning algorithms that predict customer behaviour, personalise experiences, and automate decisions. However, when privacy is neglected, the same technology can lead to data misuse, identity theft, and loss of public trust.

As businesses adopt AI at scale, they must ensure transparency, consent, and accountability. Companies like Aidify Learning and Mobility are proving that innovation and privacy can coexist by designing AI systems that are powerful, secure, and ethically responsible, delivering a Secure Customer Experience built on trust and transparency.

- Everyday impact of AI

- Data privacy as a core concern

- Risk of misuse and lost trust

- Ethical and secure AI by Aidify Pvt Ltd

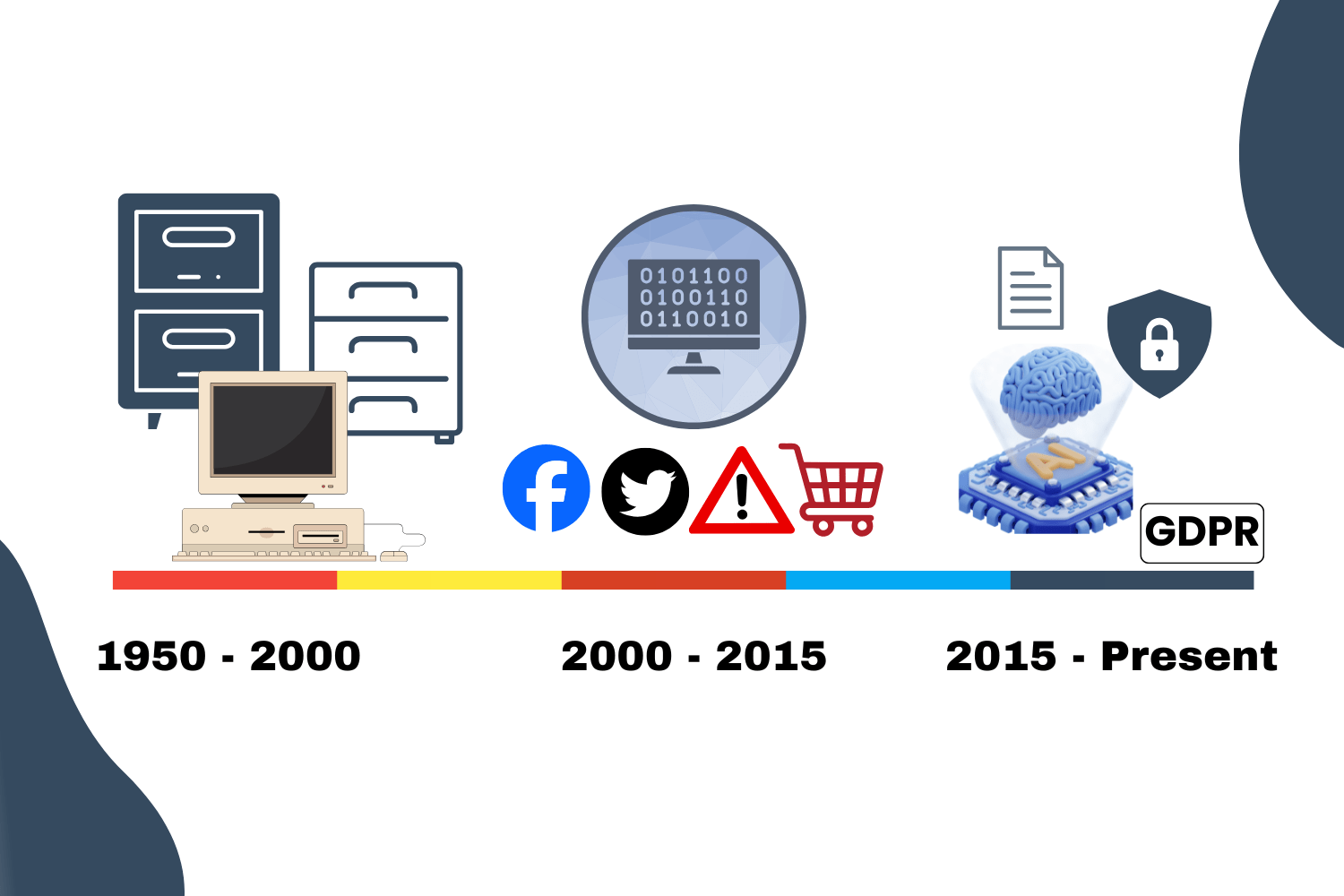

A Look Back: The Evolution of AI and Data Privacy

Early Days of Data and Automation (1950s–2000s)

In the early days of computing, data collection was minimal. Most systems were rule-based and required manual inputs. Privacy concerns were limited because digital footprints were small. With the rise of the internet in the 1990s, businesses began collecting user data for marketing and product improvement. Still, AI was in its infancy, and privacy laws were almost non-existent.

The Big Data Era (2000–2015)

As social media platforms, e-commerce, and mobile apps exploded, massive amounts of user data became available. AI systems started using this data for predictive analytics: recommending products, detecting fraud, and automating marketing campaigns. However, this era also saw a surge in data breaches and unauthorised tracking, prompting global debates about privacy. The Cambridge Analytica scandal, which came to light in 2018 but involved data harvested during this period, made consumers realise how vulnerable their data could be.

“As technology races ahead, the protection of privacy must accelerate with it.

AI should not only make decisions, but it should also make responsible decisions.

Earning trust is not optional; it’s the foundation of every customer relationship.”

— Brad Smith (President, Microsoft)

Modern AI and Privacy-Conscious Innovation (2015–Present)

Today’s AI systems are more advanced, capable of natural language processing, emotional intelligence, and autonomous decision-making. But they are also under constant scrutiny. Governments introduced laws like GDPR (Europe), CCPA (California), and DPDP Act (India) to regulate how companies use and protect data. Businesses must now design AI systems that are not only smart but also trustworthy. This is where ethical organisations like Aidify Learning and Mobility have taken the lead, embedding privacy-by-design frameworks into their AI architecture.

Core Pillars of AI and Data Privacy

To ensure that data-driven innovation remains ethical and sustainable, businesses must align their AI systems with core privacy principles. These principles form the moral and technical foundation of responsible AI, balancing innovation, protection, and user trust.

At Aidify Learning and Mobility, these five pillars are at the heart of every AI project we design, ensuring that technology respects people as much as it serves them. They are the foundation of a Secure Customer Experience in every digital interaction.

1. Data Transparency – Building Clarity and Confidence

Transparency is the first step toward trust.

Organisations must clearly communicate what data is being collected, how it is used, and why it is necessary. This empowers users to make informed decisions about sharing their information.

Best Practices:

- Display concise privacy notices and consent forms.

- Provide real-time data usage updates within AI interfaces.

- Allow users to easily access and correct their information.

Aidify in Action:

Aidify’s AI systems include user transparency dashboards that show exactly how customer data contributes to personalised insights without exposing any personal identifiers. Every user interaction begins with a simple message.

2. Security and Encryption – Safeguarding Digital Integrity

Security is the foundation of privacy. Without strong safeguards, even the most ethical AI system becomes vulnerable. AI models process sensitive information, from financial data to behavioural patterns, making them prime targets for cyber threats.

Best Practices:

- Implement end-to-end encryption during data transmission.

- Use multi-factor authentication (MFA) and secure APIs for internal communication.

- Regularly conduct penetration testing and vulnerability scans.

Aidify in Action:

Aidify uses AES-256 encryption for all stored and transmitted data, and every AI module includes built-in anomaly detection to flag suspicious access attempts in real time. This ensures that both client and end-user data remain safe from breaches or leaks.
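To make the idea of flagging suspicious access attempts concrete, here is a minimal sketch in Python. It is a simple rule-based stand-in, not a description of Aidify's detector: the `AccessMonitor` class, its thresholds, and the client identifier are all hypothetical. It flags a client once it records more than a set number of failed attempts inside a sliding time window.

```python
from collections import defaultdict, deque


class AccessMonitor:
    """Flags a client that exceeds max_failures failed attempts within window_seconds."""

    def __init__(self, max_failures=3, window_seconds=60):
        # Both thresholds are illustrative assumptions, not recommended values.
        self.max_failures = max_failures
        self.window_seconds = window_seconds
        self._failures = defaultdict(deque)  # client_id -> timestamps of recent failures

    def record(self, client_id, timestamp, success):
        """Record one access attempt; return True if the client now looks suspicious."""
        if success:
            return False
        recent = self._failures[client_id]
        recent.append(timestamp)
        # Drop failures that have fallen out of the sliding window.
        while recent and timestamp - recent[0] > self.window_seconds:
            recent.popleft()
        return len(recent) > self.max_failures


monitor = AccessMonitor(max_failures=3, window_seconds=60)
# Four failed attempts within 30 seconds: only the fourth crosses the threshold.
flags = [monitor.record("client-42", t, success=False) for t in (0, 10, 20, 30)]
print(flags)
```

A production system would more likely combine several signals (location, device, time of day) and learn thresholds from data, but the sliding-window shape stays the same.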

3. Data Minimisation – Collect Less, Achieve More

AI doesn’t need all data, just the right data.

Collecting unnecessary information increases privacy risks and storage costs without improving accuracy. Data minimisation ensures AI systems use only what’s essential for a specific function or prediction.

Best Practices:

- Avoid collecting sensitive personal data unless absolutely required.

- Regularly review and purge outdated or redundant datasets.

- Adopt federated learning to analyse decentralised data without central storage.

Aidify in Action:

Aidify employs a “Purpose-Limited Data Collection” model. When building predictive systems for clients, only contextually relevant data (e.g., transaction history, not identity details) is processed. This approach reduces exposure while maintaining high model accuracy.
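One way to picture purpose-limited collection is an allow-list of fields per processing purpose, applied before any record reaches a model. The sketch below assumes exactly that. The purposes, field names, and the `minimise` helper are hypothetical and only illustrate the principle.

```python
# Hypothetical mapping from processing purpose to the fields it may use.
ALLOWED_FIELDS = {
    "churn_prediction": {"customer_ref", "transaction_history", "tenure_months"},
    "support_routing": {"customer_ref", "last_ticket_topic"},
}


def minimise(record, purpose):
    """Return a copy of record containing only the fields allowed for purpose."""
    if purpose not in ALLOWED_FIELDS:
        # Refuse to process data for a purpose that has no declared policy.
        raise ValueError(f"No collection policy defined for purpose: {purpose}")
    allowed = ALLOWED_FIELDS[purpose]
    return {key: value for key, value in record.items() if key in allowed}


raw = {
    "customer_ref": "c-1001",
    "full_name": "Example Person",
    "email": "person@example.com",
    "transaction_history": [120.0, 75.5],
    "tenure_months": 18,
}
slim = minimise(raw, "churn_prediction")
print(sorted(slim))
```

Failing loudly on an undeclared purpose is a deliberate choice here: it forces every new use of the data to be written down before it can run.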

4. User Empowerment – Giving People Control Over Their Data

The ultimate goal of AI ethics is empowerment, not control. When users can choose how their data is used, they feel respected and valued, and they are more willing to trust and engage with AI-driven platforms.

Best Practices:

- Offer opt-in and opt-out choices for personalisation.

- Create self-service privacy dashboards where users can view, edit, or delete their information.

- Educate customers about their data rights and AI interactions.

Aidify in Action:

Aidify Learning and Mobility builds privacy control features into its products so that users can manage permissions directly. Whether it’s an AI chatbot or an analytics dashboard, customers can instantly adjust settings, ensuring transparency and autonomy at every touchpoint.

5. Accountability and Oversight – Governing AI Responsibly

AI systems must not operate in a vacuum. Ethical oversight ensures every automated decision aligns with fairness, legality, and organisational values. This pillar emphasises the need for human supervision, regular audits, and clear accountability frameworks.

Best Practices:

- Appoint a Data Protection Officer (DPO) or AI Ethics Lead.

- Conduct quarterly AI bias and compliance audits.

- Maintain logs of AI decisions and review them for fairness and accuracy.

Aidify in Action:

Aidify maintains an AI Governance Board that reviews every new deployment for bias, fairness, and compliance. Internal dashboards track decision-making transparency and generate audit-ready reports aligned with GDPR and India’s DPDP Act.
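Keeping a reviewable log of AI decisions can start very small. The following sketch assumes an in-memory, append-only log in which each decision is stored as a JSON line, plus a helper that lists low-confidence decisions for human review. The `DecisionRecord` fields and the 0.8 threshold are illustrative assumptions, not a prescribed audit format.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class DecisionRecord:
    decision_id: str
    model_version: str
    outcome: str
    confidence: float
    reason: str  # plain-language explanation shown to reviewers


class DecisionLog:
    """Append-only log; entries are serialised once and never modified."""

    def __init__(self):
        self._entries = []

    def append(self, record):
        self._entries.append(json.dumps(asdict(record), sort_keys=True))

    def needing_review(self, min_confidence=0.8):
        """Return the IDs of decisions whose confidence is below the threshold."""
        records = (json.loads(line) for line in self._entries)
        return [r["decision_id"] for r in records if r["confidence"] < min_confidence]


log = DecisionLog()
log.append(DecisionRecord("d-1", "v1.2", "approved", 0.95, "stable payment history"))
log.append(DecisionRecord("d-2", "v1.2", "declined", 0.55, "limited transaction data"))
flagged = log.needing_review(min_confidence=0.8)
print(flagged)
```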

Building Customer Trust in AI-Driven Interactions

Trust is the most valuable currency in digital transformation. Customers will only engage deeply with brands that respect their privacy.

Ways to build trust include:

- Human-AI Transparency: Disclose when customers are interacting with an AI system.

- Consent-Based Communication: Always seek permission before storing or using customer information.

- Explainable AI: Provide reasoning behind AI-driven recommendations or decisions.

- Consistency Across Channels: Ensure privacy policies remain uniform across chatbots, websites, and mobile apps.

For instance, when a retail AI chatbot recommends a product, it should inform the user that the suggestion is based on their previous preferences, not hidden surveillance.

Educating and Empowering Customers

Even the best AI privacy policies are ineffective if customers don’t understand them. Education builds confidence and helps users make informed choices about their data. Companies can empower customers through awareness campaigns that explain how AI works in simple terms, privacy training that teaches safe data management practices, and feedback systems that allow users to report issues or concerns. When users feel informed and included, they shift from being passive data subjects to active digital participants, fostering a more transparent, secure, and cooperative AI ecosystem.

Ethical AI: From Automation to Accountability

AI should not only be intelligent but also moral. Ethical AI ensures fairness, responsibility, and humanity in every algorithmic decision. Key elements of ethical AI include bias detection systems to prevent unfair outcomes, explainability tools that provide clarity and ensure compliance, and ethical review boards that oversee AI deployment responsibly. Aidify Learning and Mobility upholds these principles by establishing an ethical AI committee that reviews all AI models for transparency, fairness, and social impact before deployment, ensuring technology serves people with integrity and accountability.

Data Protection: Safeguarding the Backbone of AI

AI models are only as reliable as the data they are trained on. Therefore, data integrity and security must be top priorities.

Effective practices include:

- Secure storage: Using cloud servers with strict encryption protocols.

- Access control: Limiting internal access based on employee roles.

- Regular audits: Identifying and resolving vulnerabilities in AI pipelines.

- Federated learning: Training AI locally on user devices so data never leaves the user’s environment.

Aidify Learning and Mobility utilises federated AI models that minimise central data collection, enhancing security while maintaining performance. These efforts ensure that businesses deliver a Secure Customer Experience every time users interact with AI-driven systems.
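For readers new to federated learning, the sketch below simulates one common variant, federated averaging, in plain Python. Each simulated client fits a one-parameter model `y = weight * x` on its own data, and the server only ever sees the resulting weights, averaged by sample count. Everything runs in a single process here, and the function names, learning rate, and toy data are assumptions chosen for illustration.

```python
def local_update(weight, data, lr=0.01, epochs=20):
    """Run gradient descent on one client's data for the model y = weight * x."""
    for _ in range(epochs):
        # Gradient of the mean squared error with respect to weight.
        grad = sum(2 * (weight * x - y) * x for x, y in data) / len(data)
        weight -= lr * grad
    return weight


def federated_round(global_weight, client_datasets):
    """One round: clients train locally, the server averages their weights."""
    total = sum(len(data) for data in client_datasets)
    # Only (weight, sample_count) pairs leave each client, never the raw data.
    updates = [(local_update(global_weight, data), len(data)) for data in client_datasets]
    return sum(w * n for w, n in updates) / total


# Two simulated clients whose private data both follow y = 3x.
clients = [
    [(1.0, 3.0), (2.0, 6.0)],
    [(3.0, 9.0), (4.0, 12.0), (5.0, 15.0)],
]
weight = 0.0
for _ in range(10):
    weight = federated_round(weight, clients)
print(round(weight, 3))
```

Real deployments add secure aggregation, client sampling, and protection against weights leaking information about the training data. None of that is modelled here.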

Real-World Impacts of Privacy-Focused AI

Companies that prioritise data privacy experience measurable and long-term benefits. These include increased customer retention and satisfaction, reduced legal and reputational risks, enhanced brand credibility, and stronger data-driven decision-making based on accurate, consented information. For instance, privacy-first AI platforms in the banking sector have reported up to a 30% increase in digital adoption, driven by customer confidence in secure and transparent interactions, all contributing to a truly Secure Customer Experience.

The Role of Global Regulations

Global privacy regulations have become the foundation for building responsible and trustworthy AI systems. Three frameworks collectively shape the ethical use of AI worldwide: the GDPR (EU), which ensures data transparency and the right to be forgotten; the CCPA (California, USA), which gives consumers control over their personal data; and the DPDP Act (India), which emphasises consent, accountability, and secure data processing. By aligning with these laws, companies demonstrate their commitment to protecting consumer rights and fostering trust. Aidify Learning and Mobility ensures compliance with all major international frameworks, embedding legal readiness directly into its AI systems. This proactive approach not only guarantees compliance but also strengthens a Secure Customer Experience across every interaction.

How We Do AI and Data Privacy – Building Trust in AI-Driven Customer Interactions with Aidify

At Aidify Learning and Mobility, our goal is to ensure that every AI-driven customer interaction is intelligent, transparent, and privacy-focused. We follow a systematic approach that combines advanced technology with ethical responsibility.

1. Privacy-by-Design Implementation

We embed privacy protection into every stage of AI system development. Instead of treating privacy as an afterthought, we build data protection measures in from the planning and architecture stage.

Example: When developing AI analytics tools, Aidify Learning and Mobility ensures all personal identifiers are anonymised or encrypted before any data is processed. This guarantees insights are accurate but non-invasive.
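As a rough illustration of replacing personal identifiers before processing, the sketch below uses a keyed hash (HMAC-SHA256) from Python's standard library. Strictly speaking this is pseudonymisation rather than full anonymisation: the same input always maps to the same token, so records can still be joined, and anyone holding the key could recompute tokens for guessed identifiers. The function names, field list, and demo key are assumptions.

```python
import hashlib
import hmac


def pseudonymise(identifier, secret_key):
    """Return a stable keyed hash of identifier (64 hex characters)."""
    return hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def strip_identifiers(record, secret_key, id_fields=("email", "phone")):
    """Return a copy of record with the listed identifier fields replaced by tokens."""
    cleaned = dict(record)
    for field in id_fields:
        if field in cleaned:
            cleaned[field] = pseudonymise(str(cleaned[field]), secret_key)
    return cleaned


# Demo key only; a real key belongs in a secrets manager, not in source code.
key = b"demo-key-for-illustration-only"
record = {"email": "person@example.com", "basket_value": 42.5}
cleaned = strip_identifiers(record, key)
print(cleaned["basket_value"], len(cleaned["email"]))
```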

2. Transparent AI Communication

We make sure every customer understands when and how AI is being used. Our systems are designed to explain decisions and recommendations clearly, removing the “black box” problem from AI interactions.

Example: If our chatbot suggests a service, it also provides context such as “This suggestion is based on your previous interactions,” making the AI experience open and trustworthy.

3. Ethical AI Frameworks and Bias Control

We embed strong ethical governance into our AI lifecycle. Each AI model undergoes regular audits to detect bias, unfair treatment, or inaccurate predictions.

Example: Aidify Learning and Mobility uses multi-source datasets to train AI models, ensuring diverse representation and reducing the risk of discrimination. Any deviation triggers an internal review and retraining cycle.
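A bias audit needs at least one measurable check. The sketch below computes one widely used metric, the demographic parity gap: the difference between the highest and lowest approval rates across groups. The 0.2 review threshold, the group labels, and the sample decisions are invented for illustration, and a real audit would look at several metrics rather than this one alone.

```python
from collections import defaultdict


def approval_rates(decisions):
    """decisions: iterable of (group, approved) pairs -> {group: approval rate}."""
    counts = defaultdict(lambda: [0, 0])  # group -> [approved, total]
    for group, approved in decisions:
        counts[group][0] += int(approved)
        counts[group][1] += 1
    return {group: ok / total for group, (ok, total) in counts.items()}


def parity_gap(decisions):
    """Difference between the highest and lowest group approval rates."""
    rates = approval_rates(decisions)
    return max(rates.values()) - min(rates.values())


sample = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]
gap = parity_gap(sample)
needs_review = gap > 0.2  # hypothetical threshold for triggering a review
print(gap, needs_review)
```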

4. Advanced Data Security and Encryption

We apply enterprise-grade security practices that safeguard sensitive information. All data handled by our AI systems is encrypted, access-controlled, and monitored in real time.

Example: In one CRM integration project, Aidify Learning and Mobility implemented dynamic data masking so employees could access only relevant data, protecting customer privacy while maintaining workflow efficiency.
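Dynamic data masking can be sketched as a view function that decides, per role, which fields are shown in full and which are masked at read time. The roles, field names, and masking rules below are hypothetical and do not describe the CRM project mentioned above. The stored record itself is never altered.

```python
# Hypothetical role policy: fields each role may see unmasked.
ROLE_VISIBLE_FIELDS = {
    "support_agent": {"name", "open_tickets"},
    "billing": {"name", "card_number", "balance"},
}
# Fields that keep their last four characters when masked.
PARTIAL_MASK_FIELDS = {"card_number"}


def mask(field, value):
    """Mask a value fully, or keep the last four characters for selected fields."""
    text = str(value)
    if field in PARTIAL_MASK_FIELDS and len(text) > 4:
        return "*" * (len(text) - 4) + text[-4:]
    return "****"


def view_for_role(record, role):
    """Return a masked copy of record; unknown roles see nothing unmasked."""
    visible = ROLE_VISIBLE_FIELDS.get(role, set())
    return {k: (v if k in visible else mask(k, v)) for k, v in record.items()}


customer = {
    "name": "Example Person",
    "card_number": "4111111111111111",
    "balance": 250.0,
    "open_tickets": 2,
}
agent_view = view_for_role(customer, "support_agent")
print(agent_view)
```

Defaulting unknown roles to a fully masked view means a missing policy entry hides data instead of exposing it.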

5. User Empowerment and Data Transparency

We give users control over their own information. Aidify Learning and Mobility builds user dashboards that allow individuals to view, edit, or delete their stored data whenever they choose.

Example: Customers can opt out of data collection or adjust personalisation settings directly, reinforcing autonomy and digital trust.
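The view, edit, delete, and opt-out controls described here map onto a very small interface. The sketch below is an in-memory illustration only: the `PrivacyDashboard` class and its method names are assumptions, and personalisation is treated as opt-in, so it stays off until the user enables it.

```python
class PrivacyDashboard:
    """In-memory store giving each user view, edit, delete, and opt-in controls."""

    def __init__(self):
        self._profiles = {}
        self._personalisation = {}  # user_id -> bool; absent means not opted in

    def save(self, user_id, profile):
        self._profiles[user_id] = dict(profile)

    def view(self, user_id):
        # Return a copy so callers cannot change stored data by accident.
        return dict(self._profiles.get(user_id, {}))

    def edit(self, user_id, field, value):
        self._profiles[user_id][field] = value

    def delete(self, user_id):
        # Remove both the stored profile and the consent setting.
        self._profiles.pop(user_id, None)
        self._personalisation.pop(user_id, None)

    def set_personalisation(self, user_id, enabled):
        self._personalisation[user_id] = bool(enabled)

    def can_personalise(self, user_id):
        return self._personalisation.get(user_id, False)


dashboard = PrivacyDashboard()
dashboard.save("u-7", {"city": "Pune", "language": "en"})
dashboard.set_personalisation("u-7", True)
dashboard.edit("u-7", "city", "Mumbai")
dashboard.set_personalisation("u-7", False)  # the user opts out again
print(dashboard.view("u-7"), dashboard.can_personalise("u-7"))
```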

6. Continuous Monitoring and Compliance

We stay aligned with international data protection standards like GDPR and CCPA. Our compliance systems automatically flag potential data risks and ensure all AI activities meet global legal requirements.

Example: When handling cross-border projects, Aidify Learning and Mobility systems anonymise region-specific data to ensure compliance with local privacy laws.

7. Education and Awareness Programs

We educate businesses and users about AI ethics, security, and transparency. Aidify Learning and Mobility conducts workshops and creates awareness materials that explain how AI uses data and how customers can manage their privacy effectively.

Example: During corporate AI training sessions, we demonstrate how responsible data usage can improve both customer trust and brand credibility.

The Future: AI, Privacy, and Predictive Trust

The next evolution of AI will focus on predictive trust systems that not only protect privacy but also anticipate risks before they occur.

Future trends include:

- Emotion-aware AI that personalises experiences without breaching boundaries.

- Zero-knowledge AI models that make predictions without storing user data.

- Blockchain-based consent tracking for complete transparency.

- Voice and biometric privacy technologies to prevent misuse of identity data.

As AI becomes more human-like, ethical and privacy-first design will define market leaders. Companies like Aidify Learning and Mobility are already investing in these forward-looking technologies, ensuring AI remains both innovative and responsible.

Conclusion

AI-driven interactions have redefined how businesses connect with customers: faster, smarter, and more personalised than ever before. But true digital transformation goes beyond intelligence; it requires trust, transparency, and accountability. Building this trust isn’t a one-time task; it’s a continuous commitment to ethics, education, and evolution. By merging technological excellence with moral responsibility, Aidify Learning and Mobility exemplifies how organisations can shape a future where AI not only understands people but also respects them. When privacy and intelligence move hand in hand, the result isn’t just smart business; it’s trusted innovation, the ultimate hallmark of a Secure Customer Experience.